Musical Artist Recognition

The Problem:

We set out to build a model that identifies the artist of a given song from audio features alone. Humans can intuitively classify music into genres and recognize an artist after hearing just a few seconds of a song. The aim of this task is to determine whether audio features alone are sufficient to classify music at or above a human level. Many music platforms (Spotify, Pandora, etc.) use user preferences to link similar artists together for recommendation purposes. The outcome of this project indicates whether audio features could also help with this task.

Our Solution:

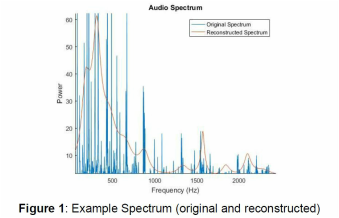

We used linear predictive coding (LPC) coefficients to build our main feature set. LPC coefficients represent the spectral envelope of a signal in compressed form, which is why they are popular in speech processing applications. As seen in Figure 1, the spectrum reconstructed from the LPC coefficients roughly follows the shape of the original spectrum. This compresses the representation while retaining the necessary information. We trained a multiclass support vector machine (SVM) for classification using the Statistics and Machine Learning Toolbox in MATLAB. SVMs are inherently binary classifiers. However, training several binary classifiers (for example, one for each pair of classes) and combining their results yields a classifier that can output more than two labels.
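The Python sketch below is for readers who want to reproduce something like Figure 1 outside MATLAB. It estimates LPC coefficients with the autocorrelation method and the Levinson-Durbin recursion, then evaluates the resulting spectral envelope. It is an illustration under stated assumptions, not the project's code. The function names `lpc` and `lpc_envelope`, the Hamming window, and the use of NumPy are our own choices. The write-up does not state the LPC order the project used.

```python
import numpy as np

def lpc(frame, order):
    """LPC by the autocorrelation method and Levinson-Durbin recursion.

    Returns (a, err): a = [1, a1, ..., a_order] are the coefficients of the
    prediction-error filter A(z) = 1 + a1 z^-1 + ... + a_order z^-order,
    and err is the remaining prediction-error energy.
    """
    x = np.asarray(frame, dtype=float) * np.hamming(len(frame))  # taper edges (assumed window)
    n = len(x)
    r = np.array([x[: n - k] @ x[k:] for k in range(order + 1)])  # autocorrelation r[0..order]
    a = np.zeros(order + 1)
    a[0] = 1.0
    err = r[0]
    for i in range(1, order + 1):
        acc = r[i] + a[1:i] @ r[i - 1:0:-1]
        k = -acc / err                          # reflection coefficient
        a[1:i] = a[1:i] + k * a[i - 1:0:-1]     # update lower-order coefficients
        a[i] = k
        err *= 1.0 - k * k                      # shrink the prediction error
    return a, err

def lpc_envelope(a, err, n_freqs=512):
    """Model magnitude spectrum sqrt(err) / |A(e^{jw})| for w in [0, pi)."""
    w = np.linspace(0.0, np.pi, n_freqs, endpoint=False)
    A = np.exp(-1j * np.outer(w, np.arange(len(a)))) @ a  # A evaluated on the unit circle
    return w, np.sqrt(err) / np.abs(A)
```

For a frame `x`, calling `a, err = lpc(x, 12)` (12 is only an illustrative order) and then `lpc_envelope(a, err)` gives a smooth envelope. That envelope is on the same scale as `np.abs(np.fft.rfft(x * np.hamming(len(x))))`, so the two can be overlaid in the way Figure 1 compares the original and reconstructed spectra.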

Testing:

A total of 1,265 songs from 32 musical artists were collected for this project. The artists were grouped into four genres: electronic, pop, rap, and rock. The songs also spanned several decades, ranging from 1960s rock and roll to modern dance music. The dataset was split so that the model was trained on 75% of the songs and tested on the remaining 25%. Accuracies were determined for both the 32-class artist classification problem and the 4-class genre classification problem.
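The 75/25 split and the idea of combining binary SVMs into a multiclass classifier can be sketched together in Python. This is not the MATLAB toolbox's implementation. As stand-ins, the sketch assumes three things:

- a plain linear SVM trained by Pegasos-style subgradient descent;
- one binary SVM per pair of classes (one-versus-one);
- majority voting to pick the final label.

The helper names and hyperparameters (`lam`, `epochs`, the random seeds) are hypothetical.

```python
import itertools
import numpy as np

def _augment(X):
    # Append a constant column so the bias is learned as an ordinary weight.
    return np.hstack([X, np.ones((X.shape[0], 1))])

def train_linear_svm(X, y, lam=0.1, epochs=20, seed=0):
    """Linear SVM via Pegasos-style subgradient descent; y must be in {-1, +1}."""
    rng = np.random.default_rng(seed)
    Xa = _augment(X)
    w = np.zeros(Xa.shape[1])
    t = 0
    for _ in range(epochs):
        for i in rng.permutation(len(Xa)):
            t += 1
            eta = 1.0 / (lam * t)
            violated = y[i] * (Xa[i] @ w) < 1   # is the hinge loss active?
            w *= 1.0 - eta * lam                # step from the L2 penalty
            if violated:
                w += eta * y[i] * Xa[i]         # step from the hinge loss
    return w

def fit_one_vs_one(X, y):
    """Train one binary SVM for every pair of classes (hypothetical helper)."""
    classes = np.unique(y)
    models = {}
    for c1, c2 in itertools.combinations(classes, 2):
        mask = (y == c1) | (y == c2)
        signs = np.where(y[mask] == c1, 1.0, -1.0)
        models[(c1, c2)] = train_linear_svm(X[mask], signs)
    return classes, models

def predict_one_vs_one(X, classes, models):
    """Each pairwise SVM votes for one class; the most-voted class wins."""
    index = {c: k for k, c in enumerate(classes)}
    votes = np.zeros((len(X), len(classes)), dtype=int)
    rows = np.arange(len(X))
    Xa = _augment(X)
    for (c1, c2), w in models.items():
        winner = np.where(Xa @ w >= 0, index[c1], index[c2])
        votes[rows, winner] += 1
    return classes[np.argmax(votes, axis=1)]   # ties go to the lower class index

def split_75_25(n, seed=0):
    """Random split of n song indices: 75% for training, 25% for testing."""
    idx = np.random.default_rng(seed).permutation(n)
    cut = int(round(0.75 * n))
    return idx[:cut], idx[cut:]
```

One-versus-one needs K(K-1)/2 binary classifiers. For the 32 artists here that would be 496. A one-versus-all scheme would need only 32, at the cost of more imbalanced binary problems.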

Results:

Using the LPC coefficients of 32 sampled 1-second portions of audio from each song, the artist and genre classification accuracies were 16.26% and 49.51%, respectively. We varied several parameters of the feature set, including the number of portions, the length of each portion, and the sections of the song being sampled. However, these changes improved accuracy only slightly: the maximum artist classification accuracy was 21.92% and the maximum genre classification accuracy was 50.49%. This suggests that the algorithm's performance is largely insensitive to such modifications.
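The sampling step above can be illustrated with a small helper that cuts evenly spaced excerpts from a song. The write-up does not say how the 32 portions were positioned. Even spacing, the `start_frac`/`end_frac` section bounds, and the name `sample_portions` are therefore assumptions. Each returned row would then be passed to an LPC routine. How the per-portion coefficients were combined into one feature vector is likewise unspecified.

```python
import numpy as np

def sample_portions(audio, fs, n_portions=32, seconds=1.0, start_frac=0.0, end_frac=1.0):
    """Cut n_portions evenly spaced excerpts of `seconds` length (hypothetical helper).

    Only audio between start_frac and end_frac of the song is sampled.
    Returns an array of shape (n_portions, round(seconds * fs)).
    """
    audio = np.asarray(audio)
    length = int(round(seconds * fs))
    lo = int(start_frac * len(audio))
    hi = int(end_frac * len(audio)) - length   # last valid start index
    if hi < lo:
        raise ValueError("selected section is shorter than one portion")
    starts = np.linspace(lo, hi, n_portions).astype(int)
    return np.stack([audio[s:s + length] for s in starts])
```

Varying `n_portions`, `seconds`, and the section bounds corresponds to the parameter changes described above.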